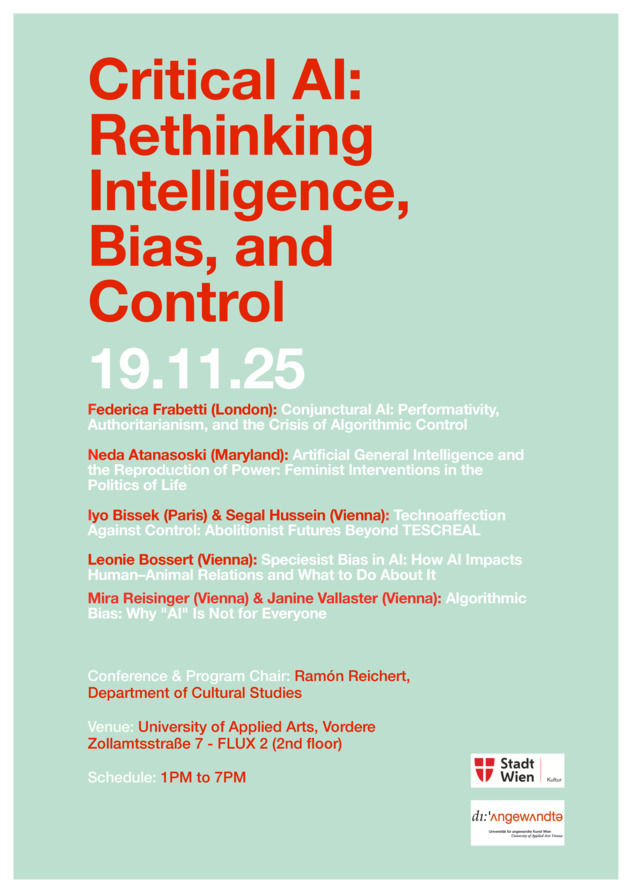

Critical AI: Rethinking Intelligence, Bias, and Control

- International Conference

Organisers/Management

Date

- 19 November 2025, 13:00–19:00, Universität für angewandte Kunst in Wien, Wien, Österreich (Flux 2, VZA7)

Keywords

Discussion, Electronic Media, Talk, Cultural Politics, Internet Art, Media Art

Text

The conference "Critical AI: Rethinking Intelligence, Bias, and Control" investigates artificial intelligence as a cultural and design-driven phenomenon, foregrounding the ways in which agency is conceptualized, structured, and operationalized through AI systems. Rather than approaching AI as a neutral or autonomous technology, the event explores how design practices, software architectures, and human-machine interfaces embed normative assumptions about action, responsibility, and autonomy.

Artificial intelligence can be understood not merely as a technical innovation but as a cultural practice deeply embedded in social, historical, and political contexts. This perspective emphasizes how AI systems are shaped by human values, power relations, and collective imaginaries, reflecting and reinforcing cultural norms and ideologies. Viewing AI as a cultural practice invites critical scrutiny of the ways in which it influences everyday life, knowledge production, and identity formation. Moreover, it foregrounds the role of diverse communities and actors in co-constructing AI technologies, highlighting issues of agency, representation, and ethical responsibility beyond purely technical frameworks.

By drawing on interdisciplinary perspectives from media theory, critical AI studies, gender studies, and science and technology studies (STS), the conference addresses AI as a site of cultural production and political negotiation. Contributions will examine how code, interfaces, and infrastructures shape and mediate agency, and how these dynamics reflect broader sociotechnical imaginaries and power relations. The conference seeks to foster transdisciplinary dialogue on the aesthetic, ethical, and political dimensions of AI, and to highlight how artificial intelligence functions not merely as a tool, but as a practice that redefines how agency is distributed, claimed, and contested in contemporary culture.

The concepts of AI bias and algorithmic bias (cf. Benjamin, Race After Technology, 2019; Eubanks, Automating Inequality, 2018) describe the structural distortions inherent in machine decision-making processes, such as those arising from discriminatory training datasets or the reproduction of historical prejudices within classification procedures. The conference focuses on a critical interrogation of how AI systems do not merely reflect the world as it is but help reproduce and exacerbate the very structures of inequality and exclusion they claim to transcend. Particular attention will be given to algorithmic data processing within the contexts of platform capitalism, state surveillance, biometric control, and the growing commodification of social practices. To what extent do AI technologies encode categories of social difference (such as ethnicity, race, gender, class, disability, age, and sexual orientation), and how do these mechanisms of representation affect social participation, justice, and epistemic authority?

A particular focus of the conference is the critical analysis of key concepts shaping current understandings of AI and digital society: machine learning, dataveillance, datafication, digital ecology, algorithmic bias, and AI bias. These terms denote core processes through which data-driven technologies intervene in social, political, economic, and ecological systems, often under the guise of objective efficiency, yet frequently with opaque and normatively charged effects. Through algorithmic monitoring, predictive analytics, and automated decision-making, AI systems increasingly operate as mechanisms of social control, embedding biases, reinforcing dominant power structures, and producing new forms of visibility and invisibility. By scrutinizing these processes, the conference seeks to uncover the complex interplay between technology, governance, and cultural practices, highlighting how AI-mediated surveillance reshapes not only what is known but also who is made accountable, visible, or marginalized within digital societies.

We ask: What forms of knowledge and authority are produced by AI systems? How do algorithmic classifications and predictive models normalize deviance, regulate visibility, and delimit agency? In what ways do these systems extend older regimes of surveillance while introducing new modalities of control? Dataveillance (cf. Browne, Surveillance Futures, 2020; Zuboff, The Age of Surveillance Capitalism, 2019) refers to pervasive monitoring through algorithmic systems deployed by both state and commercial actors.

A central theoretical framework underpinning the conference is Critical AI, an interdisciplinary field that scrutinizes algorithmic systems not merely from a technical standpoint but through lenses of power critique, social philosophy, and cultural theory. Critical AI interrogates the institutional, economic, and political contexts in which AI applications emerge and the epistemic and social exclusions they engender (cf. Crawford, Atlas of AI, 2021; Kitchin, The Data Revolution, revised edition, 2021). Similarly, the perspective of Queer AI opens new conceptual spaces beyond binary classificatory logics (cf. Keyes, The Misgendering Machines, 2019; D’Ignazio et al., Queering Data, 2023). It critically addresses normative assumptions of gender, embodiment, identity, and representation inscribed in data models and training sets, while also proposing speculative alternatives to hegemonic AI designs.

Another focal point is Data Feminism (cf. D’Ignazio & Klein, Data Feminism, 2020) and Feminist AI (cf. Browne et al., Feminist AI: Critical Perspectives on Algorithms, Data, and Intelligent Machines, 2023), an approach that integrates feminist theory, postcolonial studies, social justice, and critical data practices. Data Feminism challenges the illusion of objective data collection and advocates for contextual, participatory, and equitable modes of knowledge production. It foregrounds the visibility of structural inequalities and critically examines how epistemic violence is embedded in data models through the exclusion, distortion, or homogenization of plural social realities. These approaches are complemented by postcolonial and decolonial perspectives on Race & Technology (cf. Amoore, Cloud Ethics, 2020; Noble, Algorithms of Oppression, revised edition, 2023), which investigate how AI infrastructures perpetuate racialized classifications, for example in biometric facial recognition, profiling, or algorithmic decision-making within policing and migration systems.

The conference also engages with the concept of digital ecology, which analyses the complex entanglements of digital technologies with ecological, affective, and political infrastructures (cf. Parikka, Operational Images, 2023; Gabrys, Program Earth, 2018). This approach moves beyond understanding AI and surveillance as isolated phenomena, instead situating them within complex systems of environmental impact, sensory experience, and socio-political relations. It highlights how digital infrastructures consume natural resources, generate electronic waste, and reshape human and non-human interactions, while also mediating affective and cognitive experiences through data flows and algorithmic logics. In this context, perspectives on open data (cf. Milan & Treré, Big Data from the South(s), 2019) are discussed, emphasizing transparency and democratic participation while critically interrogating global power asymmetries in data production and utilization. By integrating digital ecology into the analysis, the conference foregrounds the interdependence of technological, environmental, and social systems, inviting reflections on sustainability, care, and accountability in the design and deployment of AI.

References:

- Amoore, Louise. Cloud Ethics: Algorithms and the Attributes of Ourselves and Others. Duke University Press, 2020.
- Benjamin, Ruha. Race After Technology: Abolitionist Tools for the New Jim Code. Polity, 2019.
- Browne, Jude, Stephen Cave, Eleanor Drage, and Kerry McInerney (eds.). Feminist AI: Critical Perspectives on Algorithms, Data, and Intelligent Machines. Oxford University Press, 2023.
- Browne, Simone. Surveillance Futures: Social and Ethical Implications of New Technologies. Routledge, 2020.
- Couldry, Nick & Mejias, Ulises A. The Costs of Connection: How Data Is Colonizing Human Life and Appropriating It for Capitalism. Stanford University Press, 2019.
- Crawford, Kate. Atlas of AI: Power, Politics, and the Planetary Costs of Artificial Intelligence. Yale University Press, 2021.
- D’Ignazio, Catherine & Klein, Lauren F. Data Feminism. MIT Press, 2020.
- D’Ignazio, Catherine et al. Queering Data: Computational Culture and the Politics of Inclusion. MIT Press, 2023.
- Eubanks, Virginia. Automating Inequality: How High-Tech Tools Profile, Police, and Punish the Poor. St. Martin’s Press, 2018.
- Gabrys, Jennifer. Program Earth: Environmental Sensing Technology and the Making of a Computational Planet. University of Minnesota Press, 2018.
- Keyes, Os. The Misgendering Machines: Trans/HCI Implications of Automatic Gender Recognition. Proceedings of the ACM on Human-Computer Interaction, 2019.
- Kitchin, Rob. The Data Revolution: Power, Knowledge and Society (2nd ed.). Sage, 2021.
- Milan, Stefania & Treré, Emiliano. Big Data from the South(s): Beyond Data Universalism. Television & New Media, 2019.
- Noble, Safiya Umoja. Algorithms of Oppression (Revised ed.). NYU Press, 2023.
- Parikka, Jussi. Operational Images: From the Visual to the Invisual. University of Minnesota Press, 2023.
- Zuboff, Shoshana. The Age of Surveillance Capitalism. PublicAffairs, 2019.

Lecturers

Location

Address

- Universität für angewandte Kunst in Wien, Wien, Österreich

- Oskar-Kokoschka-Platz 2

- 1010 Wien

- Österreich

Associated Media Files

Image#1

Document#2